(DVO313) Building Next-Generation Applications with Amazon ECS

- 1. DVO313: Building Next-Generation Applications with Amazon ECS. Matt DeBergalis (@debergalis), Cofounder and VP of Product. October 2015. © 2015, Amazon Web Services, Inc. or its Affiliates. All rights reserved.

- 2. What to expect from the session: • Overview of the connected client application architecture, in which rich web and mobile clients maintain persistent network connections to cloud microservices, and of Meteor, a JavaScript application platform for building these apps. • Discussion of the unique devops requirements of connected client apps and microservices. • Reasons for delivering Galaxy, Meteor’s cloud runtime, on Amazon ECS. • Deep dive into our use of Amazon ECS.

- 3. Open source: the JavaScript app platform for mobile and web • Open source (MIT) • 10th most starred project on GitHub. Cloud platform: fully supported Galaxy runtime • Deploy, operate, and monitor apps and services • Built on Amazon ECS • Launched to the public on 10/5. Complete ecosystem: team of over 30, hundreds of OSS contributors • $30M+ raised from Andreessen Horowitz, Matrix, and others • 100+ development and training partners.

- 4. Meteor: a JavaScript application platform. (Diagram: JavaScript shown alongside the CLR and the JVM as application platforms.)

- 5. (Diagram: Galaxy running on an ECS cluster across AZ 1 and AZ 2, with an ELB in front of the proxies, a scheduler, Galaxy servers, and App A v2 containers replacing dead App A containers.)

- 6. Websites: links and forms • Page-based • Viewed in a browser. Apps: modern UI/UX • No refresh button • Browser, mobile, and more.

- 7. Websites: stateless • Request/response • Presentation on the wire. Apps: stateful • Publish/subscribe • Data on the wire.

- 8. The architecture required for modern apps is different

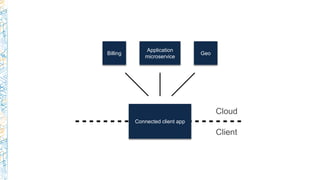

- 10. Connected client: 1. Stateful clients and servers with persistent network connections. 2. Reactive architecture, where data is pushed to clients in real time. 3. Code running both on the client and in the cloud: the app spans the network.
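To make the publish/subscribe contrast concrete, here is a minimal in-memory sketch of a hub that pushes updates to every subscriber of a topic instead of waiting for clients to ask. The `Hub`, `Subscribe`, and `Publish` names are hypothetical and not Meteor's API; a real system would add per-client connections, authorization, and a policy for slow consumers.

```go
package main

import (
	"fmt"
	"sync"
)

// Hub is a hypothetical in-memory publish/subscribe broker: every update
// published to a topic is pushed to all current subscribers of that topic.
type Hub struct {
	mu     sync.Mutex
	topics map[string][]chan string
}

func NewHub() *Hub {
	return &Hub{topics: make(map[string][]chan string)}
}

// Subscribe registers interest in a topic and returns a buffered channel on
// which future updates for that topic are delivered.
func (h *Hub) Subscribe(topic string) <-chan string {
	h.mu.Lock()
	defer h.mu.Unlock()
	ch := make(chan string, 16)
	h.topics[topic] = append(h.topics[topic], ch)
	return ch
}

// Publish pushes an update to every subscriber of topic and returns how many
// subscribers received it.
func (h *Hub) Publish(topic, update string) int {
	h.mu.Lock()
	defer h.mu.Unlock()
	delivered := 0
	for _, ch := range h.topics[topic] {
		select {
		case ch <- update:
			delivered++
		default:
			// Subscriber's buffer is full; this sketch simply drops the update.
		}
	}
	return delivered
}

func main() {
	hub := NewHub()
	a := hub.Subscribe("scores")
	b := hub.Subscribe("scores")
	n := hub.Publish("scores", "player1=42")
	fmt.Println(n, <-a, <-b) // 2 player1=42 player1=42
}
```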

- 12. Modern app architecture. Off the shelf: HTML templates • App logic • Microservices • Database. Build / integrate: reactive UI update system • Native mobile container • Speculative client-side updates • Client-side data store • Custom data sync protocol • Realtime database monitoring • Build & update system. Without a platform, you assemble it yourself.

- 13. Modern app architecture: assemble it yourself vs. with Meteor. The same stack (HTML templates, app logic, microservices, and database, plus the reactive UI update system, native mobile container, speculative client-side updates, client-side data store, custom data sync protocol, realtime database monitoring, and build & update system) is shown twice: once split between off-the-shelf and build/integrate components, and once provided by Meteor, the open-source JavaScript app platform.

- 14. JavaScript is the only reasonable language for cross platform app development

- 15. The architecture required for modern apps is different

- 16. The devops required for modern apps is different

- 17. The devops required for modern apps is more complex

- 18. Connected client devops: • Persistent, stateful connections. • Seamless application updates: “hot code push”. • Client tracking and metrics. • Complex array of microservices. • Mobile considerations (builds, push notifications).

- 19. Design requirements: • Scalable multi-tenant: 100k users, 1M processes, 100M sessions. • Accessible to developers without a sophisticated devops background. • Suitable for expert teams and complex apps. • High availability of user apps and of the Galaxy infrastructure. • Online updates of all Galaxy components. • Smooth path to customer-managed cloud. • Use off-the-shelf parts wherever possible.

- 20. Galaxy: connected client management. (Layer diagram: web and mobile devices on top; application logic and services; a connected client management layer providing session management, hot code deploy, and metrics; and an infrastructure layer of container management and IaaS resources.)

- 21. Containers and orchestration: • O(100k) independent user processes that need isolation. • Granular and efficient, which is essential in a multi-tenant system. • Surprisingly important: fast spin-up. • Speed and responsiveness are an essential part of a great developer experience. • Fast spin-up lets us build around a “single-shot” container model. • Layering offers a path to user-supplied binaries.

- 22. ECS container management: • Lots of exciting options here: ECS, Kubernetes, Marathon, … • The managed-service argument is compelling; it is the same case we make to our customers for Galaxy. • Integration with other parts of AWS saves us time and code. Example: services automatically register containers with Elastic Load Balancing (ELB). • Support for multiple Availability Zones. • Bottom line: ECS got us to market faster than the alternatives.

- 23. Implementation

- 24. (Diagram: a frontend where developers and admins use the Galaxy UI, backed by logs, metrics, app images, and app state; and a backend of Clusters 1, 2, and 3, each with a manager and several app containers.)

- 25. Galaxy. (Diagram: an ECS cluster spanning AZ 1 and AZ 2, with App A containers in each Availability Zone.)

- 26. Galaxy. (Diagram: the same ECS cluster, now with an ELB in front of a proxy in each Availability Zone, routing to the App A containers.)

- 27. Galaxy. (Diagram: the ECS cluster behind the ELB, now also running App C, Service B, and schedulers alongside the proxies and App A containers.)

- 28. Galaxy. (Diagram: the full cluster behind the ELB, with proxies, the scheduler, App A, App C, and Service B containers, plus Galaxy UI containers.)

- 29. Deeper dive: • Custom scheduler • Connected client proxy • User metrics

- 30. Scheduling containers: • Need fine-grained control over how individual tasks are allocated to container instances and across Availability Zones. • Container health depends on the high-level behavior of app processes, not just on low-level checks. • Need rate limits and a backoff policy when restarting application containers. (Not our code; potentially not the same policy for all users.) • Users need visibility into container health. • Need to ensure that system-essential containers (proxy, Galaxy UI) can be scheduled even if resources are over-committed.

- 31. Writing a custom scheduler: The default ECS scheduler isn’t designed to do these kinds of things. That’s okay! Instead, ECS provides the cluster state and task management APIs needed to write our own: about 1,500 lines of Go. • High-availability app containers must be distributed across Availability Zones. • App containers should be evenly distributed across instances within an Availability Zone. • Container instances should be roughly equally loaded. • Each container instance must have space to run a proxy and a scheduler. The scheduler also implements rate limiting, application health checks, and coordinated version updates.
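The placement rules above can be sketched as a ranking over candidate instances: prefer the Availability Zone with the fewest containers of the app, then the instance with the fewest containers of the app, then the instance with the most free memory, while never eating into the space held back for a proxy and a scheduler. `Instance`, `Place`, and the 512 MB reservation are hypothetical; this is not Galaxy's scheduler, only one way to express the stated constraints.

```go
package main

import "fmt"

// Instance is a hypothetical view of one ECS container instance.
type Instance struct {
	ID        string
	AZ        string
	FreeMemMB int            // memory not yet reserved by tasks
	AppCount  map[string]int // running containers per app on this instance
}

// reservedMB is held back on every instance so that a proxy and a scheduler
// can always be placed there (illustrative value).
const reservedMB = 512

// Place returns the ID of the instance that should run one more container
// of app, or "" if no instance can fit it without touching the reservation.
func Place(instances []Instance, app string, needMB int) string {
	perAZ := map[string]int{} // containers of this app per Availability Zone
	for _, in := range instances {
		perAZ[in.AZ] += in.AppCount[app]
	}
	best := -1
	for i, in := range instances {
		if in.FreeMemMB-needMB < reservedMB {
			continue // would not leave room for a proxy and a scheduler
		}
		if best < 0 || better(in, instances[best], perAZ, app) {
			best = i
		}
	}
	if best < 0 {
		return ""
	}
	return instances[best].ID
}

// better reports whether a is a preferable target to b: spread across AZs
// first, then across instances in the AZ, then balance memory load.
func better(a, b Instance, perAZ map[string]int, app string) bool {
	if perAZ[a.AZ] != perAZ[b.AZ] {
		return perAZ[a.AZ] < perAZ[b.AZ]
	}
	if a.AppCount[app] != b.AppCount[app] {
		return a.AppCount[app] < b.AppCount[app]
	}
	return a.FreeMemMB > b.FreeMemMB
}

func main() {
	instances := []Instance{
		{ID: "i-1", AZ: "az1", FreeMemMB: 2048, AppCount: map[string]int{"appA": 2}},
		{ID: "i-2", AZ: "az1", FreeMemMB: 4096, AppCount: map[string]int{"appA": 1}},
		{ID: "i-3", AZ: "az2", FreeMemMB: 1024, AppCount: map[string]int{}},
		{ID: "i-4", AZ: "az2", FreeMemMB: 3072, AppCount: map[string]int{}},
	}
	fmt.Println(Place(instances, "appA", 512)) // i-4: empty AZ, most free memory
}
```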

- 32. State sync. (Diagram: the frontend/backend layout from slide 24, with a scheduler in place of the manager in each of Clusters 1, 2, and 3, syncing state with the frontend.)

- 33. State sync. (Diagram: the scheduler, driven by policies, reads the desired config <app, version, containers, HA> from app state and uses the ECS API (StartTask, StopTask, ListTasks, DescribeTasks) to obtain the actual config [<container status, exit code>].)
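One pass of this state sync can be sketched as a pure function that compares the desired config with the actual task list and returns the actions to take. It does not call the ECS API; it only computes how many tasks a pass would launch with StartTask and which task IDs it would stop with StopTask. The types are hypothetical, and "running" stands in for "passed health checks", which the real scheduler evaluates separately. Old-version tasks are kept until enough new-version tasks are running, matching the coordinated update described later in the talk.

```go
package main

import "fmt"

// Desired mirrors the slide's <app, version, containers, HA> tuple.
type Desired struct {
	App        string
	Version    string
	Containers int
	HA         bool // unused here; would feed placement across AZs
}

// Task is a simplified view of what DescribeTasks reports for one container.
type Task struct {
	ID       string
	App      string
	Version  string
	Running  bool
	ExitCode int // meaningful only when the task has stopped
}

// Plan is what one reconciliation pass would do.
type Plan struct {
	Start int      // number of tasks to launch (StartTask)
	Stop  []string // task IDs to stop (StopTask)
}

// Reconcile computes a Plan for one app from desired and actual state.
func Reconcile(d Desired, actual []Task) Plan {
	var p Plan
	var current, stale []string
	for _, t := range actual {
		if t.App != d.App || !t.Running {
			continue // other apps and exited tasks need no stop call
		}
		if t.Version == d.Version {
			current = append(current, t.ID)
		} else {
			stale = append(stale, t.ID)
		}
	}
	if len(current) < d.Containers {
		p.Start = d.Containers - len(current)
		return p // keep old-version tasks serving until replacements run
	}
	// Enough current-version tasks: stop any surplus and all stale ones.
	p.Stop = append(p.Stop, current[d.Containers:]...)
	p.Stop = append(p.Stop, stale...)
	return p
}

func main() {
	d := Desired{App: "appA", Version: "v2", Containers: 2, HA: true}
	actual := []Task{
		{ID: "t1", App: "appA", Version: "v1", Running: true},
		{ID: "t2", App: "appA", Version: "v1", Running: true},
	}
	fmt.Printf("%+v\n", Reconcile(d, actual)) // {Start:2 Stop:[]}
	actual = append(actual,
		Task{ID: "t3", App: "appA", Version: "v2", Running: true},
		Task{ID: "t4", App: "appA", Version: "v2", Running: true})
	fmt.Printf("%+v\n", Reconcile(d, actual)) // {Start:0 Stop:[t1 t2]}
}
```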

- 34. Scheduling the scheduler: • To ensure the scheduler stays alive, we create an ECS service calling for exactly 1 scheduler task. • If the scheduler goes down, crashed containers will no longer be restarted, and users won’t be able to launch new containers or stop old ones. This is a reasonable failure mode. • We’re considering changing to a “keep <n> running” model, using Amazon DynamoDB to broker a leadership election among the set.

- 35. Connected client proxy: • Manages the persistent connection between clients and the appropriate application backend or microservice process. • Implements stable sessions and coordinated version updates. • Shared-nothing architecture: any proxy can serve any request. • High availability: multiple proxies in multiple Availability Zones. • Scheduled as an ECS service; binds to the ELB.

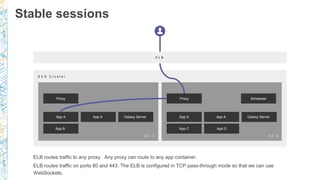

- 36. Stable sessions. (Diagram: an ECS cluster across AZ 1 and AZ 2 behind an ELB, with proxies, Galaxy servers, a scheduler, and containers for Apps A, B, C, and D.) The ELB routes traffic on ports 80 and 443 to any proxy, and any proxy can route to any app container. The ELB is configured in TCP pass-through mode so that we can use WebSockets.

- 37. Stable sessions. (Same diagram.) The proxy routes the initial request to a random container and sets a cookie on the client with the ID of the selected container. On subsequent connections (XHR, or a reconnect after an interrupted WebSocket), the proxy uses the cookie to determine the backend.

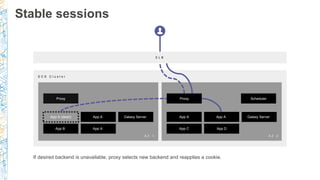

- 38. Stable sessions. (Diagram: one of the App A containers is dead.) If the desired backend is unavailable, the proxy selects a new backend and reapplies the cookie.

- 39. Coordinated version updates. (Diagram: App A v1 containers across AZ 1 and AZ 2, with proxies, Galaxy servers, and the scheduler; clients are on v1.) App updates require the cooperation of the scheduler and proxy components.

- 40. Coordinated version updates. (Diagram: App A v2 containers start alongside the v1 containers; clients are still on v1.) The first step is to spin up new containers in parallel with the old ones. (This can be done in a rolling fashion, not shown here.)

- 41. Coordinated version updates. (Diagram: four App A v2 containers running; some v1 containers are dead, two v1 containers remain.) Once the new containers pass health checks, the scheduler starts to tear down old client connections and the old containers.

- 42. Coordinated version updates. (Diagram: all v1 containers are dead; clients move from v1 to v2.) The proxy recognizes that a code update is in progress, ignores the session cookie, and routes the client to a new container, establishing a new stable session.
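The proxy's side of a coordinated update can be sketched as a routing rule: only containers running the target version are candidates, so a cookie pointing at an old-version container is ignored and the client lands on new code. `Backend`, `route`, and the `pick` parameter are hypothetical; in this sketch the scheduler is assumed to switch `target` to the new version only after the new containers pass health checks, and the caller would then set a fresh session cookie.

```go
package main

import "fmt"

// Backend is a hypothetical proxy-side view of one app container.
type Backend struct {
	ID      string
	Version string
}

// route picks a backend for a (re)connecting client. cookieID is the
// container named in the session cookie ("" if none); target is the version
// clients should be on. pick chooses an index among candidates (random in a
// real proxy). It returns false if no target-version container exists.
func route(backends []Backend, cookieID, target string, pick func(n int) int) (Backend, bool) {
	var candidates []Backend
	for _, b := range backends {
		if b.Version != target {
			continue // old-version containers are never chosen, even via cookie
		}
		if b.ID == cookieID {
			return b, true // session is already on the target version
		}
		candidates = append(candidates, b)
	}
	if len(candidates) == 0 {
		return Backend{}, false
	}
	return candidates[pick(len(candidates))], true
}

func main() {
	backends := []Backend{{"a1", "v1"}, {"a2", "v1"}, {"b1", "v2"}, {"b2", "v2"}}
	first := func(n int) int { return 0 } // deterministic picker for the demo
	b, _ := route(backends, "a1", "v2", first)
	fmt.Println(b.ID) // b1: the cookie for v1 container a1 is ignored
	b, _ = route(backends, "b2", "v2", first)
	fmt.Println(b.ID) // b2: a session already on v2 stays put
}
```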

- 43. Metrics: • Galaxy collects metrics on CPU, memory, network traffic, and a count of connected clients from each running app. • A collector process (one per container instance) streams container metrics via the Docker Remote API and polls proxy metrics on a known port. • An aggregator process (a singleton) polls each collector, computes aggregate rollups (hourly, daily), and stores each time series in DynamoDB. • The aggregator expires old metrics. Tables are sharded by time range. • The Galaxy server reads directly from DynamoDB.

- 44. Our experience so far: “With the amount of growth we have seen after our launch last year, keeping the servers alive has been an uphill battle until Galaxy came along.” – Tigran Sloyan, Codefights. “Galaxy … solved many of the ongoing challenges we had with our previous server stack: load balancing across sticky sessions, scaling processes, etc.” – Shawn Young, Classcraft. • Loosely coupled architecture working well for us. • High availability strategy works: apps stayed up during IaaS outages.

- 45. What’s next: • Multiple clusters • Additional AWS regions • On-prem (customer-supplied IAM credentials) • Free tier and instance cost optimizations • … and more …

- 46. The JavaScript app platform www.meteor.com Galaxy available now!

- 47. Thank you! Matt DeBergalis – @debergalis

- 48. Remember to complete your evaluations!
