Software

- UL Procyon benchmark suite

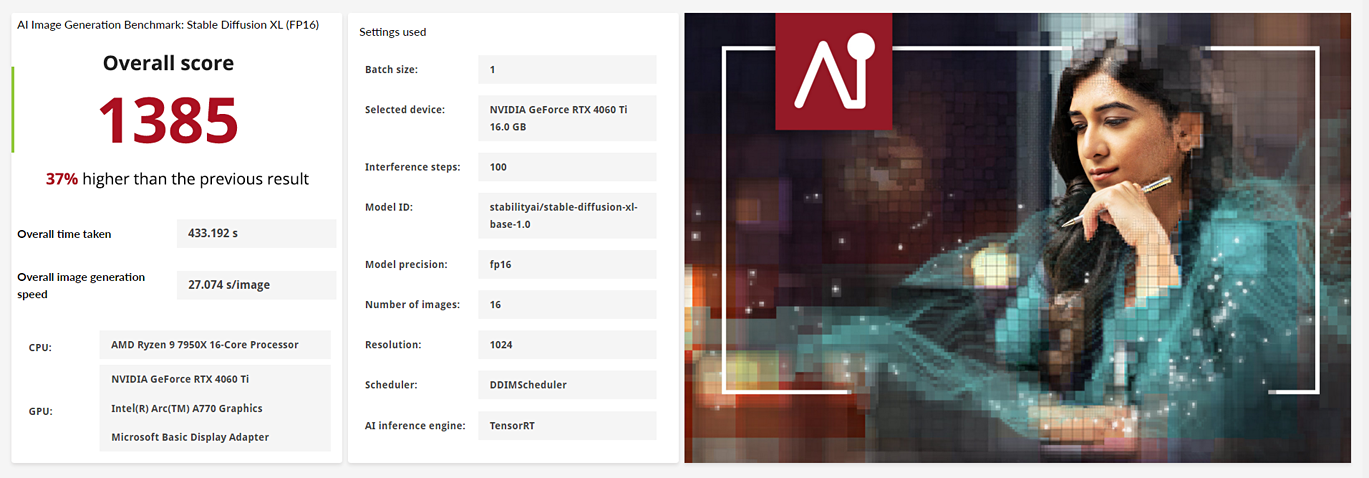

- AI Image Generation Benchmark

- AI Inference Benchmark for Android

- AI Computer Vision Benchmark

- Battery Life Benchmark

- One-Hour Battery Consumption Benchmark

- Office Productivity Benchmark

- Photo Editing Benchmark

- Video Editing Benchmark

- Testdriver

- 3DMark

- 3DMark for Android

- 3DMark for iOS

- PCMark 10

- PCMark for Android

- VRMark
