
If you read README further...

-

Okay, so taking the source you gave me (entirely disregarding my own test), I can read the following:

Which is in very stark contrast with the README:

https://www.collinsdictionary.com/dictionary/english/on-a-par-with

2.2% is not "on par with" 12%. It's like comparing wine with light beer - they are entirely disjoint, you cannot possibly claim light beer gets you as hammered as wine? And the golang thing.... jeeeez!

-

@alexhultman Your point might be valid, but I think you might be oversimplifying your tests here. Benchmarks are a tricky thing, and it's important to know what it is that you are comparing. FastAPI is a web application framework that provides quite a bit more than just an application server. So if you are comparing FastAPI to, say, NodeJS, the test should be run against a web application framework such as NestJS or similar. The same goes for Golang: the comparison should be against Revel or something like it. In @tiangolo's documentation on benchmarks you can read:

https://fastapi.tiangolo.com/benchmarks/

I believe that when the developers say:

They mean a full application on Golang or NodeJS (on some framework) vs. a full application on FastAPI.

-

I must agree with @alexhultman that the performance claims are misleading... I learned it the hard way too. Performance is not really what it claims to be. Taking another example, the one just serving a chunk of text:

To boldly state as the first feature "Fast: Very high performance, on par with NodeJS and Go" is, well... I guess I don't have to say it. It leads to disappointment down the road when you discover the truth. It would probably be better to just keep "Among the fastest Python frameworks available" and emphasize the other good features.

-

There seem to be two intertwined discussions here that I think we can address separately.

The NodeJS and Go comparison

There is definitely contention around the phrase "on par with NodeJS and Go" in the documentation. I believe the purpose of that phrase was to be encouraging so that people will try out the framework for their purpose instead of just assuming "it's Python, it'll be too slow". However, clearly the phrase can also spawn anger and be off-putting, which would be the opposite of what we're trying to achieve here. I believe that if the comparison is causing bad feelings toward FastAPI, it should simply be removed. We can claim FastAPI is fast without specifically calling out other languages (which almost always leads to defensiveness). Obviously this is up to @tiangolo and we'll need his input here when he gets to this issue.

FastAPI's Performance

If you ask "is it fast" about anything, there will be evidence both for and against. I think the point of linking to TechEmpower instead of listing numbers directly is so that people can explore on their own and see if FastAPI makes sense for their workloads. However, we may be able to do a better job of guiding people about what is "fast" about FastAPI.

For the numbers I'm about to share, I'm using TechEmpower's "Round 19", looking at only the "micro" classification (which is what FastAPI falls under) for "go", "javascript", "typescript", and "python". I don't use Go or NodeJS in production, so I'm picking some popular frameworks which appear under this micro category to compare: "ExpressJS" (javascript), "NestJS" (typescript), and "Gin" (golang). I don't know how their feature sets compare to FastAPI.

Plain Text

I believe this is what most of the comparisons above me are using. FastAPI is much slower than Nest/Express, which are much slower than Gin. Exactly what people are saying above. If your primary workload is serving text, go with Go.

Data Updates

Requests must fetch data from a database, update it, and commit it back, then serialize and return the result to the caller. Here FastAPI is much faster than NestJS/Express, which are much faster than Gin.

Fortunes

This test uses an ORM and HTML templating. Here all the frameworks are very close to each other but, in order from fastest to slowest, were Gin, NestJS, FastAPI, Express.

Multiple Queries

This is just fetching multiple rows from the database and serializing the results. Here, FastAPI slightly edges out Gin. Express and NestJS are much slower in this test.

Single query

A single row is fetched and serialized. Gin is much faster than the rest, which are, in order, FastAPI, NestJS, and Express.

JSON serialization

No database activity, just serializing some JSON. Gin blows away the competition. Express, then Nest, then FastAPI follow.

So the general theme of all the tests combined seems to be that if you're working with large amounts of data from the database, FastAPI is the fastest of the bunch. The less database activity (I/O bound), the further FastAPI falls and Gin rises. The real takeaway here is that the answer to "is it fast" is always "it depends". However, we can probably do a better job of pointing out FastAPI's database strengths in the sections talking about speed.

-

Here is a short lesson in critical thinking:

But yes, I guess we should attribute this victory to FastAPI. Because the fact it used PostgreSQL in a test that clearly favors PostgreSQL has nothing at all to do with the outcome. Nothing at all 🎶 😉 And the fact FastAPI scores last in every single test that does not involve the variability of database selection, that is just random coincidence. 🎵 🎹

-

@alexhultman If you are not happy about the different DB choices of TechEmpower, you can probably raise an issue there (e.g. TechEmpower/FrameworkBenchmarks#2845 - that repo is open to contributions), or pick another comprehensive benchmark you prefer, which we can all benefit from when choosing a framework. Also, please be reminded that so far everyone replying to you in this thread is a community member only; we are not maintainers of FastAPI. If you want to know who wrote that claim, please use git blame. Please be kind to people who are trying to have a discussion here.

-

I think this issue has gone as deep as it goes already; nothing can be said that hasn't already been said. Alright, thank you and have a nice day.

-

I just ran an all-defaults comparison of FastAPI vs ExpressJS:

I love the syntax and ease of use of FastAPI, but it's disappointing to see misleading claims about its speed. 367kb/s is NOT "on par" with 1620kb/s. That's more than 4x the throughput of "Fast"API.

But it is about twice as fast as Flask:
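To make the size of that gap concrete, the two transfer rates quoted in this comment can be turned into a ratio and a percentage difference. This is only arithmetic on the figures above, not a new measurement, and the helper name `compare_throughput` is made up for illustration.

```python
def compare_throughput(baseline, other):
    """Return (ratio, percent_higher) of `other` relative to `baseline`."""
    ratio = other / baseline
    percent_higher = (ratio - 1.0) * 100.0
    return ratio, percent_higher


fastapi_kb_s = 367.0    # FastAPI transfer rate quoted in the comment
express_kb_s = 1620.0   # ExpressJS transfer rate quoted in the comment

ratio, percent_higher = compare_throughput(fastapi_kb_s, express_kb_s)
print(f"Express moved {ratio:.1f}x the data, about {percent_higher:.0f}% more")
```

Note that "N times the throughput" and "N% higher" are different statements, which is why the two values are reported separately.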

-

How are you running FastAPI to ensure that your benchmark is valid?

-

I don't know how you did the benchmarks, but from TechEmpower benchmarks, this is the result. In a real world scenario like Data Updates, 1-20 Queries, etc. FastAPI is much faster.

-

I did exactly what @alexhultman did: created a "hello world" application in both FastAPI and ExpressJS, using all defaults. I didn't optimize anything. Then I ran:

I also ran a bare uvicorn server (with a hello world app):

and already it's slower than ExpressJS.

-

@andreixk Please check the benchmarks that I sent. If you think they are inaccurate, please open an issue in TechEmpower's GitHub repository.

-

@ycd you're missing https://deno.land/

-

Not directly related to this topic, but I'm really interested to hear about your experiences. When comparing Starlette with Express I faced the same surprise. However, by simply passing

-

Just a different point of view on the performance-between-languages "issue": Especially for web development, I prefer to have a prototype up and running, all the business (or "fun") logic implemented, and all of that implemented through delightful-to-read code. Then I try scaling (Docker & Kubernetes) or improving bottlenecks (Celery & RabbitMQ) or even implementing with as minimal a codebase as possible, using microservices written in other languages. This approach is preferable to spending months in any other language (as a basis) that has overly verbose syntax, libraries that are more often than not unmaintained or injected with malware, immature or unpromising tooling, and whole communities that are confused, unhelpful, or disoriented with "performance," "super-secure," or "we-are-the-future" complexes. At the end of the day, a couple of containers with Python (any framework) at the backend and Node (any framework) for the client will do the trick for performance as well.

P.S. But yeah, the official claim is overblown and over-simplistic. It's a shame for such a noteworthy and well-composed framework.

-

I guess the mystery is solved (kind of)! FastAPI is even faster than NodeJS (even with a single worker). You just need to make the method async.

Here is the version without async:

    from fastapi import FastAPI

    app = FastAPI()

    # Plain (sync) endpoint
    @app.get("/test")
    def test():
        return {"message": "Hello World"}

Result: Requests per second: 2596.19 [#/sec] (mean)

And with async:

    # Same endpoint, declared as a coroutine
    @app.get("/test")
    async def test():
        return {"message": "Hello World"}

Result: Requests per second: 4902.09 [#/sec] (mean)

Express:

    const express = require('express')

    const app = express()
    const port = 3000

    app.get('/', (req, res) => {
      res.json({'ok': true})
    })

    app.listen(port)

Result: Requests per second: 4545.43 [#/sec] (mean)

All the above tests are done with a single worker. As a side note, just by adding

This is unbelievable! Did I miss anything?

-

Multiprocessing performs faster, especially using 4 workers.

-

Right, just edited my comment so that my side note about the workers wouldn't cause any confusion. All tests are performed using a single worker (to make it easier to compare with NodeJS. I know NodeJS can run in multi-process mode but needs some code changes as far as I know). And you're right 4 workers gave me 8759.63 [#/sec] 🤔 It's interesting given I have more than 4 cores. |
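The "more than 4 cores" puzzle can be quantified from the two figures reported in this exchange: 4902.09 req/s for the async endpoint on one worker and 8759.63 req/s on four. The sketch below only does that arithmetic; `scaling_efficiency` is a hypothetical helper, and nothing here explains why the scaling is sub-linear. One possible cause, stated as an assumption and not something measured in this thread, is that the load generator itself becomes the bottleneck.

```python
def scaling_efficiency(single_rps, multi_rps, workers):
    """Return (speedup, efficiency), where efficiency = speedup / workers."""
    speedup = multi_rps / single_rps
    return speedup, speedup / workers


single_worker_rps = 4902.09  # async endpoint, one worker (reported above)
four_worker_rps = 8759.63    # same endpoint, four workers (reported above)

speedup, efficiency = scaling_efficiency(single_worker_rps, four_worker_rps, 4)
print(f"speedup: {speedup:.2f}x, per-worker efficiency: {efficiency:.0%}")
```

Perfect scaling would give a speedup equal to the worker count, so an efficiency well below 100% suggests something other than the application workers is limiting throughput.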

-

I acknowledge that this thread is closed; however, I wanted to add some extra information to aid in this comparison. An important detail I think a lot of these benchmarks miss is proper configuration of your libraries when using FastAPI. So, without trying to sound overly opinionated, here are a couple of things that I hope provide a better comparison...

Okay, onto the benchmarks...

Python

Install the libraries

    pip install numpy fastapi 'uvicorn[standard]' orjson

    # app.py
    from fastapi import FastAPI
    from fastapi.responses import ORJSONResponse
    import numpy as np

    # Serialize every response with orjson instead of the default encoder
    app = FastAPI(default_response_class=ORJSONResponse)


    @app.get("/json")
    async def json_test():
        return {"message": "Hello World"}


    # Sum of squares 1..length with numpy, async and sync variants
    @app.get("/async/math/numpy")
    async def async_numpy_test(length: int = 1000):
        return {"sum": int(np.sum(np.arange(1, length + 1) ** 2))}


    @app.get("/sync/math/numpy")
    def sync_numpy_test(length: int = 1000):
        return {"sum": int(np.sum(np.arange(1, length + 1) ** 2))}


    # Same computation in pure Python, async and sync variants
    @app.get("/async/math/python")
    async def async_python_test(length: int = 1000):
        return {"sum": sum(i ** 2 for i in range(1, length + 1))}


    @app.get("/sync/math/python")
    def sync_python_test(length: int = 1000):
        return {"sum": sum(i ** 2 for i in range(1, length + 1))}

Run the app

Express/NodeJS

Install the libraries

    yarn add express lodash

Note that I used TypeScript, so you will want to add the

    // app.ts
    import express from 'express';
    import _ from 'lodash';

    const app = express()
    const port = 3000

    app.get('/json', (req, res) => {
      res.send({message: 'Hello World'})
    })

    app.get('/math', (req, res) => {
      const length = req.query.length ? parseInt(req.query.length as string, 10) : 1000
      // Sums the squares of 0..length-1 (the Python version sums 1..length)
      res.send({sum: _.reduce(_.range(0, length), (p, c) => p + c ** 2, 0)})
    })

    app.listen(port, () => {
      console.log(`Example app listening on port ${port}`)
    })

Run the app

Results

JSON

Python

    ❯ wrk -c 128 -t 20 'http://localhost:8000/json'
    Running 10s test @ http://localhost:8000/json
      20 threads and 128 connections
      Thread Stats   Avg      Stdev     Max   +/- Stdev
        Latency     4.39ms    1.85ms  21.03ms   72.43%
        Req/Sec     0.94k   159.70     1.81k    69.33%
      187586 requests in 10.10s, 26.83MB read
    Requests/sec:  18570.58
    Transfer/sec:      2.66MB

Express/NodeJS

    ❯ wrk -c 128 -t 20 'http://localhost:3000/json'
    Running 10s test @ http://localhost:3000/json
      20 threads and 128 connections
      Thread Stats   Avg      Stdev     Max   +/- Stdev
        Latency     5.85ms    1.03ms  26.99ms   90.99%
        Req/Sec     1.03k    85.65     1.57k    91.35%
      205779 requests in 10.02s, 51.02MB read
    Requests/sec:  20531.14
    Transfer/sec:      5.09MB

Math

When performing mathematical operations we have a few options... With Python it is popular to use

Database

Didn't do anything here, but I have seen that performance of
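For anyone repeating the JSON runs above, the headline figure can be pulled out of saved wrk output and compared programmatically rather than by eye. This is a minimal sketch: the two strings are copied from the summary lines quoted in this comment, and `requests_per_sec` is a hypothetical helper, not part of wrk.

```python
import re

# Summary lines copied from the two wrk runs quoted above
WRK_PYTHON = """\
Requests/sec:  18570.58
Transfer/sec:      2.66MB
"""
WRK_EXPRESS = """\
Requests/sec:  20531.14
Transfer/sec:      5.09MB
"""


def requests_per_sec(wrk_output):
    """Extract the Requests/sec value from wrk's text summary."""
    match = re.search(r"Requests/sec:\s*([\d.]+)", wrk_output)
    if match is None:
        raise ValueError("no Requests/sec line found")
    return float(match.group(1))


python_rps = requests_per_sec(WRK_PYTHON)
express_rps = requests_per_sec(WRK_EXPRESS)
gap_percent = (express_rps / python_rps - 1) * 100
print(f"Express handled about {gap_percent:.1f}% more requests per second")
```

On these figures the two servers are within roughly ten percent of each other on the JSON route, a much smaller gap than the all-defaults runs earlier in the thread.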

-

btw nice article https://www.travisluong.com/fastapi-vs-fastify-vs-spring-boot-vs-gin-benchmark/

-

Can anyone point out to me why using "async" makes FastAPI faster in this case?

-

@introom if using 'async', router will use
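The reply above is cut off, so what follows is an assumption about the point being made rather than a quote. FastAPI awaits `async def` endpoints directly on the event loop, while plain `def` endpoints are handed to a worker thread pool so they cannot block the loop, and that hand-off has a per-request cost. The sketch below mimics the two paths with the standard library only (`asyncio` and `run_in_executor`). It is not FastAPI's own code, and `async_handler`, `sync_handler` and `time_calls` are names invented for illustration.

```python
import asyncio
import time


async def async_handler():
    return {"message": "Hello World"}


def sync_handler():
    return {"message": "Hello World"}


async def call_async():
    # Path for `async def` endpoints: awaited directly on the event loop
    return await async_handler()


async def call_sync_in_threadpool():
    # Path for plain `def` endpoints: dispatched to a worker thread
    loop = asyncio.get_running_loop()
    return await loop.run_in_executor(None, sync_handler)


async def time_calls(call, n=2000):
    """Time n sequential calls of the given coroutine function."""
    start = time.perf_counter()
    for _ in range(n):
        await call()
    return time.perf_counter() - start


async def main():
    direct = await time_calls(call_async)
    threaded = await time_calls(call_sync_in_threadpool)
    print(f"direct await: {direct:.4f}s, via thread pool: {threaded:.4f}s")
    return direct, threaded


direct_seconds, threaded_seconds = asyncio.run(main())
```

For a handler that does almost no work, the thread hand-off can dominate the request time, which would be consistent with the gap reported earlier in the thread. For handlers that make blocking calls, the thread pool is what keeps the event loop responsive, so `async def` is not automatically the better choice.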

-

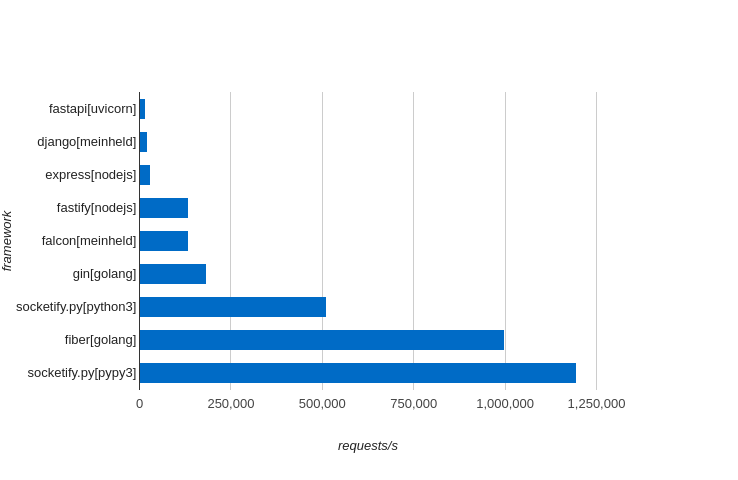

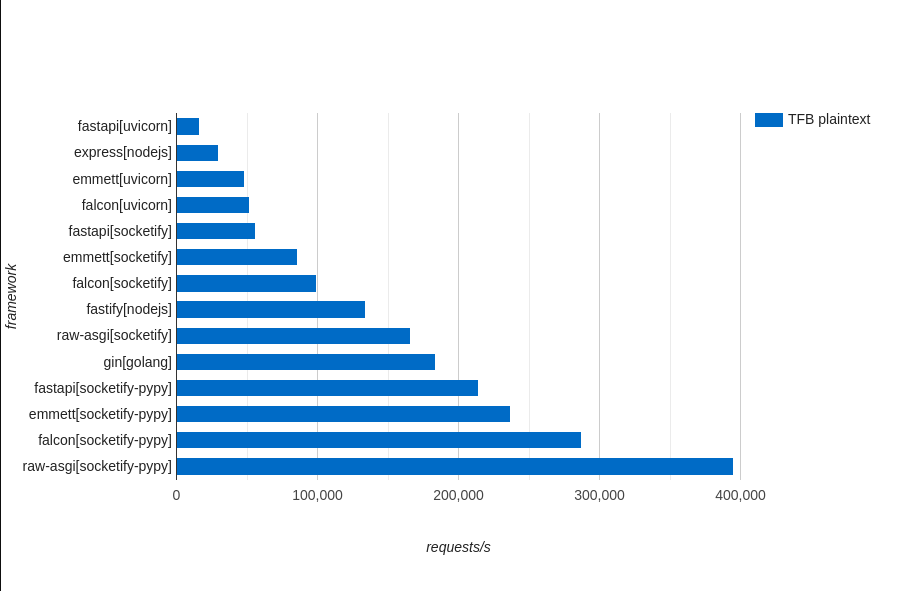

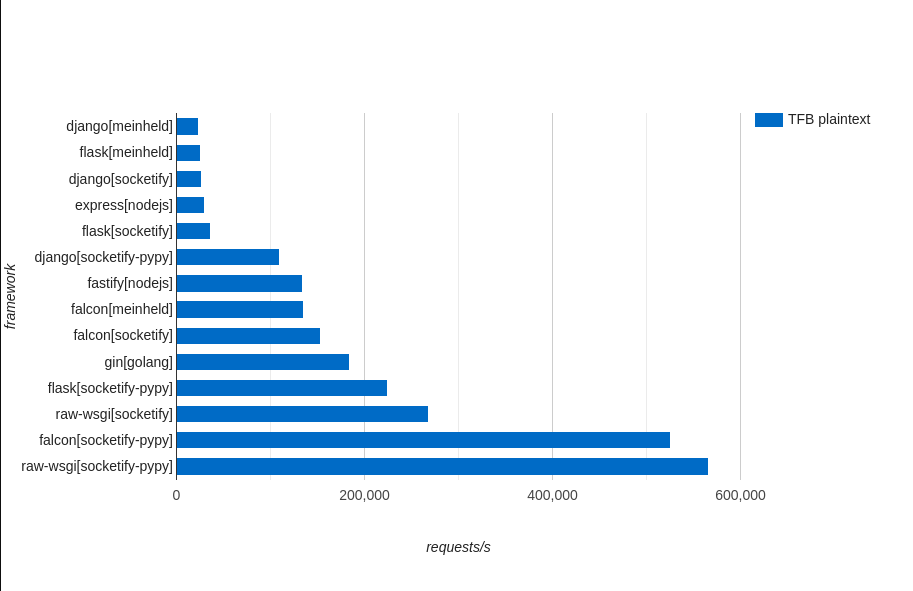

I saw people pointing out database tests. These are important because you will use DBs etc., but they aren't testing the web framework itself! They compare node.js pg vs Python asyncpg, or node.js JSON vs Python json/orjson/ujson, etc.

FastAPI is not a server, so you can only measure its overhead over raw ASGI and measure the ASGI server's performance. For measuring overhead, the TechEmpower plaintext test is actually a cool tool, so let's do it!

First, let's see how some frameworks compare in general on common servers (uvicorn and meinheld). In raw throughput gunicorn+uvicorn is not doing great, and FastAPI using it will not be able to catch up with Go frameworks like Gin or Fiber, or even with the express and fastify node.js frameworks. Only socketify.py with PyPy passes the throughput of Fiber in this case.

So I made an ASGI server (still in development) and a WSGI server using socketify (aka uWS C++) as the base.

Testing ASGI servers and web frameworks: Emmett and Falcon using uvicorn are faster than FastAPI, and faster than node.js express (remember, node.js express is really slow). Using socketify ASGI, FastAPI is able to catch up with Emmett and Falcon, but if Emmett and Falcon use socketify ASGI too, they will be faster, of course. That is still not at the same level of performance as Fastify in node.js, for example.

The raw ASGI test of socketify with CPython is faster than Fastify, but the overhead of the web frameworks pulls the performance down, and even raw ASGI in CPython is not able to catch up with the Gin golang framework (which is not fast by golang standards).

Using the same server as the base, you can see that Falcon has less overhead than Emmett, and Emmett has less overhead than FastAPI.

Socketify.py is not optimized for CPython yet but is optimized for PyPy, and you can see that using PyPy, FastAPI, Emmett and Falcon pass Gin, but are not even close to Fiber's performance! That's because the ASGI server itself is slower than Fiber!

So the claim:

is just nonsense, because FastAPI is not a server. The Uvicorn server is slower than the node.js express, fastify, gin, and fiber SERVERS! The socketify.py ASGI server is faster than the express, fastify, and gin servers but slower than the Fiber server.

The question must always be: using X server with FastAPI's overhead on top, can it be on par with NodeJS and Go? ASGI just has a lot of overhead, and on top of it, FastAPI has a lot of overhead too!

You can claim that Falcon/Emmett is faster than FastAPI, and you can also claim that socketify.py is faster than some node.js servers and golang servers, but you cannot claim that FastAPI is faster than node.js or Go, because that is comparing apples to oranges.

Can FastAPI claim to be fast? Yes! But it needs to be compared with web frameworks, not servers!

Can FastAPI claim to be the fastest Python web framework in all scenarios? No, because other web frameworks like Emmett and Falcon have less overhead.

ASGI web frameworks in general need to reduce overhead. Most of the overhead is dynamic allocations and the asyncio event loop itself, and it can be mitigated a LOT by using factories to reuse some Tasks/Futures and Request/Response objects instead of garbage-collecting them every time, and by using PyPy to be able to use stack allocations.

Raw throughput is very important for any big application because most production code should use in-memory caching for responses. That's why with socketify.py you can use a sync route to check your in-memory cache and use

You can claim:

It's not "on par" because it's faster with PyPy or slower with CPython.

If you want to see some WSGI numbers to compare overhead, here is a chart:

WSGI has less overhead because it's not using asyncio and because it is not doing a lot of unnecessary dynamic allocations. In fact, socketify.py WSGI does more copying and work than ASGI because it uses the ASGI native API and converts the headers before calling the app itself; most of the slowdown is asyncio event loop overhead.

Django is just slow, so slow that even with socketify it can't be faster than express; only when PyPy optimizes and reduces the overhead of Django can it be faster than express.

Falcon and Flask can be faster than Gin with PyPy too, but take a closer look: Falcon + WSGI has really little overhead over raw WSGI, while Falcon + ASGI has a way bigger overhead over raw ASGI.

Of course, it is not faster than fasthttp or Fiber in golang, but at least you can say that it is faster than SOME golang frameworks. And remember, PyPy is not only for compute-heavy workloads; it is also GREAT for removing unnecessary overhead.

socketify.py project: https://github.com/cirospaciari/socketify.py
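For readers who have not met the term, a "raw ASGI" application is just an async callable taking `scope`, `receive` and `send`; frameworks add routing, validation and serialization on top of that interface, and that added work is the overhead being discussed. The sketch below is a minimal plaintext app of that kind, driven in-process with a fake request so it runs without any server. It is not the benchmark code from this comment, and `raw_asgi_app`, `call_once` and the `/plaintext` path are illustrative names.

```python
import asyncio


async def raw_asgi_app(scope, receive, send):
    """Smallest useful ASGI app: always answers with a plaintext body."""
    if scope["type"] != "http":
        return  # ignore lifespan/websocket scopes in this sketch
    body = b"Hello, World!"
    await send({
        "type": "http.response.start",
        "status": 200,
        "headers": [
            (b"content-type", b"text/plain"),
            (b"content-length", str(len(body)).encode()),
        ],
    })
    await send({"type": "http.response.body", "body": body})


async def call_once(app):
    """Drive the app with one fake GET request; no server involved."""
    sent = []
    scope = {"type": "http", "method": "GET", "path": "/plaintext", "headers": []}

    async def receive():
        return {"type": "http.request", "body": b"", "more_body": False}

    async def send(message):
        sent.append(message)

    await app(scope, receive, send)
    return sent


messages = asyncio.run(call_once(raw_asgi_app))
print(messages[0]["status"], messages[1]["body"])
```

Serving this callable and a framework app with the same ASGI server, then comparing throughput, is one way to separate framework overhead from server performance.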

-

Y'all need to add more workers using the

-

@gnat throwing

-

I am outraged at @tiangolo's deceptive marketing practices! Let's file a class action lawsuit and make him pay us back all the money we... oh, wait.

This conversation is asinine. @tiangolo's various websites are a treasure trove of valuable information and educational resources. They are a gift to humanity and more valuable than any college textbook you can buy, and all for the low, low price of eternal gratitude.

In the most recent TechEmpower benchmarks, Gin, Fastify and FastAPI are all in the same ballpark, which more than justifies the "on par" claim, but I'd be curious to hear from somebody who has used all 160 of them including the top 3 which are all written in Rust.

I like how TechEmpower color codes by the first letter of the language, so Python, Perl, Pascal and PHP are all grouped together. So are C, C#, C++ and Clojure. Fun fact: the "Go" programming language is actually pronounced "Joe". That's why TechEmpower assigns it the same color as Java and JavaScript. Prove me wrong.

-

It doesn't seem all that productive to compare the Node/HTTP module to a batteries-included framework like FastAPI and others. Even comparing out-of-the-box Express to something like FastAPI does not seem useful. The Node/HTTP module is just a thin wrapper around the runtime itself, and Express is just a thin API on top of the HTTP module that adds routing, error handling, and a middleware pipeline. Express is not really interesting out of the box unless the goal of your new app is serving up static hello world responses at an extremely high rate. Express only begins to become useful for most people once you add quite a few modules to account for the needs of a typical app.

To get a more comparable view, the Node/HTTP module is better compared to Python ASGI servers, and Express to other bare-bones frameworks/toolkits (e.g. Starlette). A better comparison for FastAPI would be something like NestJS, Express with applicable modules installed, or other full-featured frameworks that target the same problem space. The Node-based frameworks may still outperform FastAPI, but it will at least be a more reasonable comparison.

-

I was about to start my learning journey on FastAPI, and somehow ended up here. Wow, I just went through the entire discussion and was overwhelmed by seeing how a proper professional technical discussion happens. For anyone who is starting their journey to learn any framework, I would highly recommend this discussion thread.

-

I wanted to check the temperature of this project, so I ran a quick, very simple benchmark with wrk and the default example:

Everything default with wrk, regular Ubuntu Linux, Python 3.8.2, latest FastAPI as of now.

Uvicorn with logging disabled (obviously), as per the README:

I get very poor performance, way worse than Node.js and really, really far from Golang:

This machine can do 400k req/sec on one single thread using other software, so 5k is not at all fast. Even Node.js does 20-30k on this machine, so this does not align at all with the README:

Where do you post benchmarks? How did you come to that conclusion? I cannot see you have posted any benchmarks at all?

Please fix marketing, it is not at all true.
